Large language models (LLMs) have emerged as a revolutionary form of artificial intelligence, capable of generating text, translating languages, creating creative content, and providing informative responses to questions. However, their immense power also brings forth security challenges that enterprises must address. This blog explores the multifaceted world of LLMs in the context of security, addressing the challenges they present and offering strategies for mitigating risks.

Fig.1 Enterprise LLMs end up on the change and variability matrix

Large Language Models (LLMs), like GPT, have gained significant attention in recent years due to their remarkable language generation capabilities. However, they also pose unique security challenges for enterprises that deploy them.

1. Data Privacy and Confidentiality

Large Language Models (LLMs) like GPT-3 require extensive amounts of training data to learn language patterns and generate coherent text. This training data often includes diverse sources such as text from the internet, books, articles, and other text-based content.

Fig.2 Data privacy and confidentiality

However, some critical considerations related to data privacy and confidentiality are essential for enterprises using LLMs:

- Sensitive Information Exposure: There’s a risk of exposing confidential business plans, customer data, financial records, or proprietary content when feeding data into an LLM.

- Compliance with Data Protection Regulations: Enterprises must ensure that the use of LLMs complies with data protection regulations like GDPR or HIPAA. Failing to do so can result in severe legal and financial consequences.

- Data Anonymization and Redaction: Before using sensitive data with LLMs, enterprises should consider anonymizing or redacting it to protect individuals’ privacy.

- Secure Data Storage: Storing training data securely is crucial. Enterprises should use secure data storage solutions with access controls, authentication, and encryption to protect the confidentiality of their data.

- Access Controls and Permissions: Limit access to the training data to only authorized personnel who need it for legitimate purposes. Implement strict access controls and permissions to prevent unauthorized access.

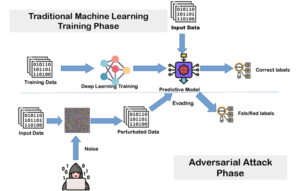

2. Adversarial Attacks

Fig.3 Adversarial Attack

Adversarial attacks refer to attempts by malicious actors to manipulate the behavior of LLMs for harmful or deceptive purposes. While LLMs are powerful tools, they can be vulnerable to these attacks, which can have significant consequences for enterprises. Here’s a detailed exploration of this point:

- Implications for Enterprises: Adversarial attacks can harm an enterprise’s reputation, disrupt operations, and lead to legal liabilities. For example, if an LLM is tricked into generating defamatory content about a competitor, it could result in a costly legal battle.

- Mitigation Strategies: Enterprises need robust mitigation strategies to defend against adversarial attacks on LLMs like Input Validation, Adversarial Training, Content Moderation, Threat Intelligence, Human Review, Behavioral Analysis.

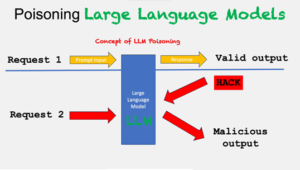

3. Model Poisoning

Model poisoning is an attack method aimed at manipulating the output of machine learning models, particularly large language models (LLMs), to generate harmful, biased, or inaccurate text. Attackers achieve this by introducing tainted data into the model’s training dataset, causing it to produce undesirable outputs.

Fig 4. LLM Poisoning

To execute a model poisoning attack, the attacker must gain access to the LLM’s training data and insert malicious data into it. For example, they could corrupt a customer support LLM with offensive content, leading it to generate harmful responses.

These attacks pose a significant security threat to LLMs, and safeguarding against them involves:

- Secure Training Environment: Isolate the LLM’s training environment from the network to prevent unauthorized access to training data.

- Robust Data Validation: Thoroughly validate the training data to eliminate poisoned data using methods like text filtering and machine learning algorithms.

- Output Monitoring: Continuously monitor the LLM’s output for anomalies using techniques such as anomaly detection and natural language processing to detect and mitigate potential attacks.

4. Access Control

Access control in LLM security in enterprises refers to the process of restricting who can access, use, and modify large language models (LLMs). This is important because LLMs can be used to generate sensitive content, such as financial data, medical records, and intellectual property. If an unauthorized user gains access to an LLM, they could use it to create counterfeit documents, steal personal information, or launch cyberattacks.

There are a number of different access control models that can be used to secure LLMs. Some of the most common models include:

- Role-based access control (RBAC): This model assigns users to different roles, and each role has a set of permissions associated with it.

- Attribute-based access control (ABAC): This model allows permissions to be granted based on attributes of the user, such as their job title, department, or location.

- Mandatory access control (MAC): This model assigns users to security clearance levels, and each security clearance level has a set of permissions associated with it.

The best access control model for an enterprise will depend on the specific needs of the organization. However, all organizations should implement some form of access control to protect their LLMs from unauthorized access.

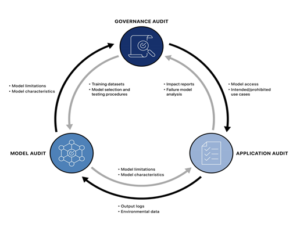

5. Monitoring and Auditing

To enhance LLM security in enterprises, monitoring and auditing can be employed in various ways:

- Monitor network traffic for unusual patterns, such as unexpected spikes or unconventional routing.

- Analyze system logs for signs of unauthorized access, like failed login attempts or access to sensitive files.

- Track user behavior for anomalies, such as accessing LLM from unauthorized locations or altering its configuration.

- Review and update security policies and procedures, including password management and incident response.

- Test security devices like firewalls, intrusion detection systems, and antivirus software to verify their functionality.

- Conduct penetration tests to identify and address security vulnerabilities, preventing potential exploitation by attackers.

Fig 5. Monitoring and Auditing

By implementing a comprehensive monitoring and auditing program, organizations can significantly improve the security of their LLMs. This will help to protect their data and systems from unauthorized access, misuse, and disruption.

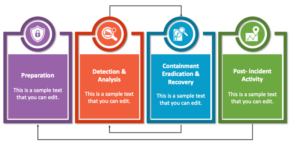

6. Incident Response Plan

An incident response plan (IRP) is a document that outlines the steps an organization will take to identify, contain, and recover from a security incident.

It should be tailored to the specific needs of the organization, but should generally include the following phases:

- Preparation: This phase includes activities such as identifying assets, threats, and vulnerabilities; creating a team of incident responders; and developing communication and documentation procedures.

- Detection and analysis: This phase involves identifying and analyzing a security incident. This may involve monitoring logs, reviewing security alerts, and interviewing affected employees.

- Containment, eradication, and recovery: This phase involves taking steps to contain the incident, eradicate the threat, and recover from the damage. This may involve isolating affected systems, removing malware, and restoring data from backups.

- Post-incident activity: This phase includes activities such as reviewing the incident, improving the IRP, and communicating with stakeholders.

Fig 6. Incident Response Plan

Large language models (LLMs) can be used to automate some of the tasks involved in incident response, such as threat detection and analysis. This can help to improve the speed and efficiency of the response process, and can help to reduce the impact of a security incident.

The integration of Large Language Models (LLMs) into an enterprise’s operations offers unparalleled opportunities for innovation, efficiency, and productivity. However, it also presents a host of security challenges that cannot be ignored. A robust security strategy is essential to harness the power of LLMs while safeguarding sensitive data, maintaining ethical standards, and protecting against emerging threats.

References

https://blog.metamirror.io/enterprise-security-in-an-llm-world-fa07a232bbf

https://arxiv.org/pdf/2302.08500.pdf

https://www.collidu.com/presentation-cyber-incident-response-plan

https://www.linkedin.com/pulse/llm-data-privacy-safeguarding-confidentiality-model-brindha-jeyaraman